The Attribution Confidence Benchmark for Mid-Market SaaS

- Jason B. Hart

- Marketing Analytics

- April 24, 2026

- Updated May 4, 2026


What Is the Attribution Confidence Benchmark?

The Attribution Confidence Benchmark is a practical way to decide whether your current attribution evidence is safe enough to use for the decision leadership wants to make.

That is different from asking whether you have the perfect attribution model.

Most mid-market SaaS teams do not get into trouble because they picked the wrong diagram in an attribution textbook. They get into trouble because a directional signal gets promoted into a budget argument, a board claim, or a software-buying justification before the evidence can carry that weight.

The report looks useful. The CRM has fields. The paid channels have conversions. The lifecycle dashboard has a sourced-pipeline view. Then the weekly conversation turns into a familiar operator mess:

- paid search looks efficient, but nobody agrees how much was branded demand capture

- campaign source exists on the lead, but not reliably on the opportunity

- RevOps trusts one lifecycle rule while marketing uses another

- finance asks whether the number ties to booked revenue, and the room starts adding caveats

- someone wants to buy attribution software before the team has named which part of the chain is actually weak

That is where this benchmark helps.

It does not promise perfect attribution. It gives the team a shared confidence language before the number gets used for a bigger decision than it deserves. If the benchmark exposes a service-level gap rather than a worksheet-level fix, the SaaS Marketing Attribution Services page shows how to turn the evidence into a scoped attribution engagement.

If you need the broader guide, start with Marketing Attribution for SaaS. If you need to see which reporting layer can prove what, use The Attribution Gap Map. If the immediate question is which first move to make, read Fix Instrumentation First vs. Fix Definitions First vs. Buy Attribution Software First. This page sits one layer below the decision: how confident should we be in the attribution evidence we already have?

Benchmark one decision, not the whole attribution system

Do not score “our attribution.”

That phrase is too broad to be useful.

Pick one decision the business is about to make and score whether the attribution evidence is strong enough for that use.

Good benchmark targets include:

- deciding whether a holdout test is ready before a paid-budget move

- reallocating paid budget between two channels

- deciding whether to scale or pause a campaign family

- explaining sourced pipeline in an executive review

- defending a channel contribution claim in board prep

- deciding whether attribution software is justified now

- deciding whether CRM or lifecycle cleanup should happen first

A useful benchmark sentence sounds like this:

We are testing whether our current attribution evidence is strong enough to move paid budget next month, or whether it is only safe for directional channel learning.

Now the scoring has a job.

A team can be directional enough to learn from channel movement and still not be budget-grade. It can be operating-grade for campaign triage and still not be safe for board narrative. That distinction prevents a lot of false confidence.

The four attribution confidence bands

Use four bands. More detail usually creates the illusion of precision without making the decision better.

| Confidence band | What it means | Safe uses | Unsafe uses |
|---|---|---|---|
| Cleanup-first | The evidence chain is too broken or contested to support the decision. | Identify the first trust break; prioritize capture, definition, or ownership repair. | Budget shifts, board claims, compensation logic, software selection based on the reported number. |
| Directional | The signal is useful for pattern-spotting, but the caveats are too large for hard decisions. | Trend checks, campaign learning, hypothesis building, early warning signals. | Permanent budget moves, pipeline-source claims, ROI certainty. |
| Operating-grade | The evidence is stable enough for recurring operating decisions with named caveats. | Weekly channel management, campaign prioritization, source-quality review, cleanup sequencing. | Board-grade narrative or major budget resets unless caveats are explicit. |
| Budget-grade | The evidence can support spend, planning, or leadership decisions because the chain is governed and repeatable. | Budget reallocation, forecast support, executive narrative, software-buying justification. | Claiming causal certainty the system still cannot prove. |

The bands are not moral judgments.

Directional is not bad. A directional attribution view can still be valuable when the team uses it honestly. The damage comes from treating it like budget-grade evidence because the dashboard looks polished or because the meeting needs a simple answer.
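One way to keep the bands honest in a planning doc or a notebook is to treat them as an ordered scale and check each proposed use against a minimum band. The sketch below is illustrative only. The use labels are hypothetical shorthand for entries in the "Safe uses" column, and it assumes a stronger band inherits every safe use of the weaker bands, which the table implies but does not state.

```python
from enum import IntEnum


class Band(IntEnum):
    """Confidence bands, ordered from weakest to strongest evidence."""
    CLEANUP_FIRST = 0
    DIRECTIONAL = 1
    OPERATING_GRADE = 2
    BUDGET_GRADE = 3


# Minimum band per use, read off the "Safe uses" column of the table.
# The keys are hypothetical labels, not an official taxonomy.
MINIMUM_BAND = {
    "trend check": Band.DIRECTIONAL,
    "campaign learning": Band.DIRECTIONAL,
    "weekly channel management": Band.OPERATING_GRADE,
    "campaign prioritization": Band.OPERATING_GRADE,
    "budget reallocation": Band.BUDGET_GRADE,
    "executive narrative": Band.BUDGET_GRADE,
}


def is_safe_use(current: Band, use: str) -> bool:
    """Return True when the current band meets the minimum band for the use.

    Assumes a stronger band inherits the safe uses of weaker bands.
    """
    return current >= MINIMUM_BAND[use]


# A directional view is fine for learning, not for moving budget.
print(is_safe_use(Band.DIRECTIONAL, "campaign learning"))
print(is_safe_use(Band.DIRECTIONAL, "budget reallocation"))
```

The ordering is the useful part: it forces the room to say which band a decision needs before anyone argues about the number.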

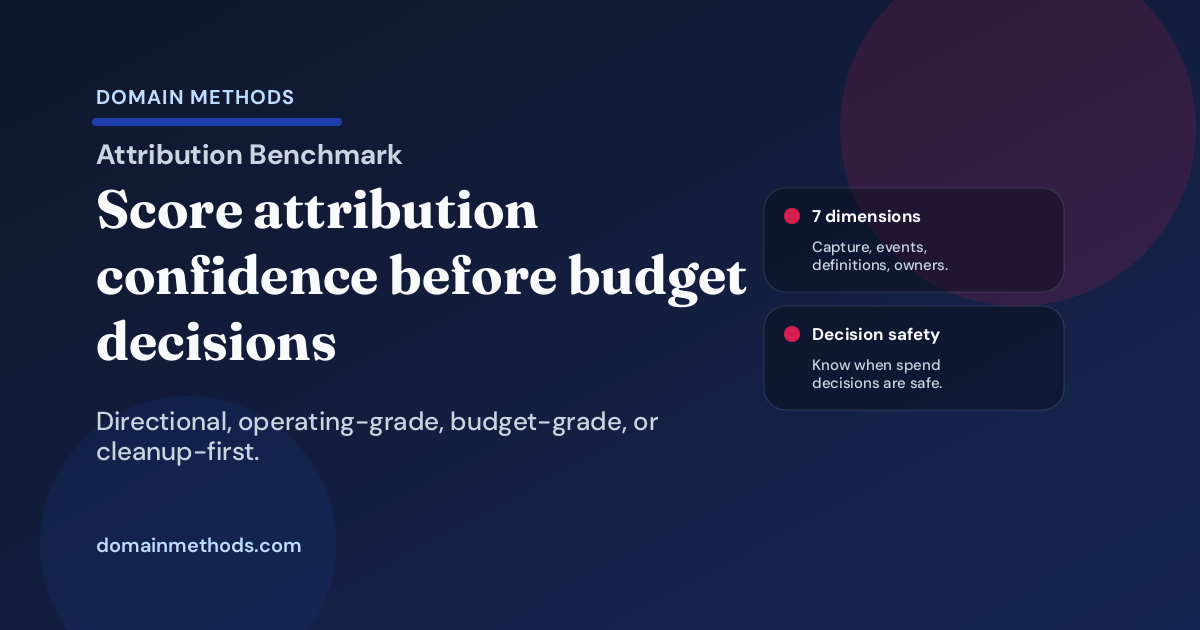

The seven dimensions to score

I would score seven dimensions. They are where attribution confidence usually breaks in real SaaS operating environments.

| Dimension | What you are testing | Weak-score signal |
|---|---|---|
| Source capture discipline | Whether UTMs, referrers, campaign fields, and original-source values survive into the systems that matter. | Paid and organic sources collapse into junk categories, direct traffic, or manually corrected fields. |
| Conversion and event coverage | Whether key hand raises, demo requests, trials, lifecycle events, and important assists are captured consistently. | The report sees the easy form fill but misses the event that actually changed the sales motion. |
| Lifecycle definition stability | Whether sourced, influenced, MQL, SQL, pipeline, and opportunity rules mean the same thing across teams. | The label stays the same while the stage logic or inclusion rule quietly changes. |
| Account and opportunity linkage | Whether lead and contact history connect cleanly to account, opportunity, pipeline, and revenue outcomes. | Attribution works at the lead layer but breaks once the buying committee and opportunity enter the room. |
| Revenue connection | Whether attribution can tie to booked, pipeline, or forecasted revenue at the right confidence level. | Marketing has activity proof, RevOps has opportunity proof, and finance still cannot reconcile the story. |
| Caveat clarity | Whether the team knows what each channel, model, and source path can and cannot prove. | Every report comes with verbal caveats that never make it into the decision artifact. |
| Owner and change-control discipline | Whether someone owns source rules, lifecycle changes, exception handling, and reporting changes. | Attribution confidence depends on whoever remembers the workaround this week. |

The operator detail that matters: weak attribution rarely fails in only one place.

A team may have decent UTMs but poor opportunity linkage. Or good CRM linkage with unstable lifecycle definitions. Or strong event coverage with no clear owner for exceptions. The benchmark is useful because it shows which break matters first for the current decision.

How to score the benchmark

Score each dimension from 1 to 3. Lower is better: 1 is strong and 3 is weak.

| Score | Meaning | Practical test |
|---|---|---|
| 1 | Strong | The rule is explicit, repeatable, and trusted under normal reporting pressure. |
| 2 | Fragile | The rule mostly works, but depends on caveats, manual review, or a few operators knowing the context. |
| 3 | Weak | The rule is missing, contested, manually rebuilt, or unreliable when the decision matters. |

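Then total the seven dimensions. Totals run from 7 (every dimension strong) to 21 (every dimension weak), so a lower total means higher confidence.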

| Total score | Confidence band | What to do next |
|---|---|---|
| 7-9 | Budget-grade | Use the evidence for the named decision, but document caveats and change controls. |
| 10-14 | Operating-grade | Use it for recurring operating decisions; avoid overclaiming in board or major-budget contexts. |
| 15-18 | Directional | Use it for learning and pattern-spotting; fix the weakest dimension before hard decisions. |
| 19-21 | Cleanup-first | Do not use the number for the decision yet; pick the first upstream trust repair. |

This is not a scientific survey. It is a working-session benchmark.

The point is to stop arguing about whether attribution is “good” and start naming exactly what the current evidence can safely support.
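If the team would rather keep the scoring in a notebook than on paper, the arithmetic is small enough to sketch in a few lines. This is a minimal illustration of the tables above, not a tool that ships with the worksheet. The dimension keys and the function name are hypothetical shorthand; the thresholds come straight from the total-score table.

```python
# Hypothetical short keys for the seven dimensions in the benchmark.
DIMENSIONS = (
    "source_capture",
    "event_coverage",
    "lifecycle_definitions",
    "account_opportunity_linkage",
    "revenue_connection",
    "caveat_clarity",
    "owner_change_control",
)


def confidence_band(scores: dict[str, int]) -> tuple[int, str]:
    """Total the seven 1-3 scores and map the total to a confidence band."""
    if set(scores) != set(DIMENSIONS):
        raise ValueError("score exactly the seven benchmark dimensions")
    if any(score not in (1, 2, 3) for score in scores.values()):
        raise ValueError("each score must be 1 (strong), 2 (fragile), or 3 (weak)")

    total = sum(scores.values())
    if total <= 9:
        band = "Budget-grade"
    elif total <= 14:
        band = "Operating-grade"
    elif total <= 18:
        band = "Directional"
    else:
        band = "Cleanup-first"
    return total, band


# Made-up scores for illustration: decent capture, weak opportunity linkage.
example = {
    "source_capture": 1,
    "event_coverage": 2,
    "lifecycle_definitions": 2,
    "account_opportunity_linkage": 3,
    "revenue_connection": 2,
    "caveat_clarity": 2,
    "owner_change_control": 1,
}
print(confidence_band(example))  # (13, 'Operating-grade')
```

The example totals 13, which lands in operating-grade even though one dimension scored weak. That is why the band and the weakest dimension should be read together.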

What each confidence band allows you to do safely

Cleanup-first

Cleanup-first means the team should not use attribution evidence as the basis for the requested decision yet.

The signals are usually obvious once someone slows the room down:

- original source is missing or overwritten for too many records

- demo-request and trial events do not line up with CRM lifecycle movement

- sourced and influenced pipeline mean different things depending on which team built the report

- opportunity linkage is so spotty that the biggest deals are explained manually

- the attribution view changes materially between reporting cycles without a documented reason

The first move here is not another model. It is trust repair.

If source capture is the break, fix instrumentation. If definitions are drifting, settle the lifecycle and revenue rules. If nobody owns the exception path, name the owner before the next reporting cycle. If the business keeps treating platform numbers like revenue truth, use the Attribution Gap Map to reset which layer is allowed to answer which question.
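The repair rules in that paragraph can be written down as a simple lookup so the first move is not renegotiated in every meeting. The sketch below only encodes the four cases named above. The key names are hypothetical, and anything outside those four returns nothing rather than a guess.

```python
from typing import Optional

# First repair per trust break, taken from the paragraph above.
# Key names are hypothetical labels for illustration.
FIRST_REPAIR = {
    "source_capture": "Fix instrumentation.",
    "definition_drift": "Settle the lifecycle and revenue rules.",
    "exception_ownership": "Name the owner before the next reporting cycle.",
    "platform_numbers_as_revenue_truth": (
        "Use the Attribution Gap Map to reset which layer answers which question."
    ),
}


def first_repair(trust_break: str) -> Optional[str]:
    """Return the first repair for a named break, or None if none is listed."""
    return FIRST_REPAIR.get(trust_break)


print(first_repair("source_capture"))
```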

Directional

Directional evidence is useful. It is just not decisive.

This is the band where many SaaS teams live longer than they want to admit. You can see movement. You can spot channel changes. You can identify obvious data-quality problems. You can learn which campaigns deserve closer review.

But you should not pretend the evidence can settle a budget fight by itself.

The operator tell is that the report is useful until someone asks for a permanent action. Then the room starts adding caveats:

- “Paid search is probably doing well, but branded demand is mixed in.”

- “This channel looks strong, but source capture changed last month.”

- “The campaign influenced pipeline, but the opportunity linkage is incomplete.”

- “That dashboard is directionally right, but finance will not use it for the final answer.”

Those caveats are not a failure if they are visible. They are a failure when they disappear from the decision.

Operating-grade

Operating-grade attribution is strong enough for recurring management.

That means the team can use it to prioritize campaign work, spot source-quality issues, review channel mix, and decide which cleanup tasks deserve attention before the next cycle.

It usually has named caveats, not hidden caveats.

A marketing leader can say, “This channel is safe to manage weekly against qualified pipeline movement, but we are not using it as final board-grade ROI proof until finance reconciliation and opportunity linkage improve.”

That sentence is not weakness. It is good operating hygiene.

Operating-grade is often the most useful near-term target. Many teams do not need to jump straight to board-grade attribution. They need attribution that can support weekly decisions without sending the business into another truth debate.

Budget-grade

Budget-grade attribution can support spend decisions because the evidence chain is stable enough to survive leadership pressure.

The team can explain:

- where the source evidence enters

- which lifecycle and revenue definitions apply

- how leads, contacts, accounts, and opportunities connect

- what the model can and cannot claim

- who owns changes and exceptions

- what changed since the last reporting cycle

Budget-grade does not mean causal certainty.

It means the evidence is good enough for the decision and the remaining caveats are explicit. That is a much higher bar than “the dashboard loaded” or “the attribution tool says the channel worked.”

What the benchmark does not prove

This benchmark does not prove that one channel caused revenue.

It does not replace incrementality testing, media mix modeling, experiment design, or a real attribution implementation. It will not make platform reporting tell the full revenue story. It will not rescue a CRM workflow that drops source context before opportunities are created.

It does something narrower and more useful for most mid-market SaaS teams:

It tells you whether the attribution evidence is trustworthy enough for the decision you are about to make.

That honesty matters. Teams waste money when they skip it.

They buy attribution software when capture and definitions are still broken. They cut channels because a directional report looked cleaner than it deserved. They defend budget with caveats that never made it into the executive packet. They ask the data team for a more advanced model when the real issue is that nobody owns source standards or lifecycle changes.

The benchmark is there to prevent that sequence.

How to use the worksheet in a working session

Use the worksheet with one decision, one metric family, and the people who can actually fix the next break.

A practical 20-minute session looks like this:

- Name the decision. Are you reallocating budget, explaining pipeline, evaluating software, or choosing a cleanup sequence?

- Score each dimension. Do not debate perfection. Score the evidence as it behaves under current reporting pressure.

- Assign the band. Cleanup-first, directional, operating-grade, or budget-grade.

- Name the unsafe use. Write the decision this evidence should not support yet.

- Pick the first fix. Choose the smallest repair that would move the confidence band before the next review.

That last step is where the benchmark earns its keep.

If the score is weak because source capture is broken, the next move is not a model debate. If the score is weak because lifecycle definitions keep drifting, the next move is not another dashboard. If the score is weak because nobody owns exceptions, the next move is not a nicer attribution interface.

It is the first confidence fix.
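The five steps of the session map cleanly onto a small record, which helps when the result has to travel into an executive packet with its caveats intact. The following is a rough sketch under my own naming. The class, field names, and example values are hypothetical, and the band is entered by the room rather than computed here.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkSession:
    """One working-session record; field names are illustrative."""
    decision: str            # step 1: the decision being benchmarked
    scores: dict[str, int]   # step 2: dimension -> score (1 strong, 3 weak)
    band: str                # step 3: the band the room assigned
    unsafe_use: str          # step 4: what this evidence should not support yet
    first_fix: str = ""      # step 5: filled in once the room agrees

    def weakest_dimensions(self) -> list[str]:
        """Return the dimensions with the highest (weakest) score."""
        worst = max(self.scores.values())
        return [name for name, score in self.scores.items() if score == worst]


# Made-up session for illustration.
session = BenchmarkSession(
    decision="Move paid budget between two channels next month",
    scores={
        "source_capture": 1,
        "event_coverage": 2,
        "lifecycle_definitions": 2,
        "account_opportunity_linkage": 3,
        "revenue_connection": 2,
        "caveat_clarity": 2,
        "owner_change_control": 1,
    },
    band="Operating-grade",
    unsafe_use="Board-grade ROI claim",
)

# The weakest dimensions are candidates; the room still picks the fix.
print(session.weakest_dimensions())
session.first_fix = "Repair lead-to-opportunity linkage"
```

The weakest score only narrows the candidates. Which break matters first still depends on the decision named in step one.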

Download the Attribution Confidence Benchmark Worksheet (PDF)

Score the seven confidence dimensions, assign a practical band, name the unsafe use, and choose the first cleanup move before attribution evidence drives a budget or leadership decision.

Instant download. No email required.


When to use this before buying attribution software

Use this benchmark before buying software when the room sounds impatient.

That impatience is understandable. Attribution arguments are expensive. Nobody wants another quarter of circular conversations about source, influence, pipeline, and revenue.

But software is a multiplier. It multiplies the operating system underneath it.

If capture is weak, software inherits weak capture. If lifecycle definitions are unstable, software encodes unstable definitions. If opportunity linkage is patchy, software gives the gap a better interface. If nobody owns exceptions, software creates a new place for the exception to hide.

The benchmark does not argue against attribution tools. It argues for buying them when the evidence chain is ready enough to benefit from them.

A budget-grade or strong operating-grade score may support a software evaluation. A directional or cleanup-first score usually says the first move belongs in instrumentation, definitions, linkage, or ownership.
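That rule of thumb can be expressed as a gate on the total score, with one caveat: the benchmark does not say where "strong" operating-grade ends inside the 10-14 range. The sketch below assumes a cutoff of 11 purely for illustration and exposes it as a parameter so the team can set its own. The function name is hypothetical.

```python
def may_support_software_evaluation(total: int, strong_operating_max: int = 11) -> bool:
    """Return True for budget-grade totals (7-9) or strong operating-grade totals.

    strong_operating_max is an ASSUMED cutoff. The benchmark does not define
    where "strong operating-grade" ends within the 10-14 range.
    """
    if not 7 <= total <= 21:
        raise ValueError("total must be between 7 and 21")
    return total <= strong_operating_max


print(may_support_software_evaluation(8))   # budget-grade total
print(may_support_software_evaluation(13))  # operating-grade, above the assumed cutoff
```

A True result only means an evaluation may be worth opening. It does not mean the purchase is justified.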

The practical takeaway

Attribution confidence is not binary.

That is the whole point.

A mid-market SaaS company can have attribution evidence that is useful for weekly learning, dangerous for budget decisions, and nowhere near ready for board narrative. Another team can have stable source capture and opportunity linkage but still overclaim because caveats never make it into the decision artifact.

The benchmark gives the room a better sentence:

This attribution view is operating-grade for campaign management, but not budget-grade until opportunity linkage and caveat handling improve.

Or:

This is cleanup-first. The number should not drive spend until source capture and lifecycle definitions stop moving under it.

Those sentences are less exciting than a perfect attribution model.

They are also much more useful when real money is on the line.



About the author

Jason B. Hart

Founder & Principal Consultant

Helps mid-size SaaS companies turn messy marketing and revenue data into decisions leaders trust.